The service went down. Monitoring was silent. The client messaged first.

I went looking - and found a ghost, a retired colleague, and twelve layers of backups inside the folder they were backing up.

Somebody was very afraid of losing a file. And not at all afraid of taking down production.

Three months later I found out why the system worked at all. It shouldn’t have.

Prologue

“It was working” - the most dangerous phrase in infrastructure.

Gluu had been running in AWS for years. Certificates renewed. Users logged in. Three critical applications hung off it. Nobody looked inside - no reason to.

I got access during onboarding, in a bundle with a dozen other accounts. Why - nobody said. I didn’t ask.

Act I: The Client Messaged

Early morning. First coffee. Slack open, not expecting anything.

The client messaged: Gluu is down.

Not a monitoring alert. Not an automated notification. A person typed in chat. Which means: everything that should have fired before them - didn’t.

Gluu itself was alive. But its certificate had expired. And the system responsible for renewing it - a t2.micro instance - existed in a zombie state. Running, but unreachable. No SSH, no SSM, no console. Just hanging there.

Then I discovered the first consequence of bad onboarding.

The SSH key for that instance was never handed over to me. Nobody mentioned it existed. Nobody said where it was stored. The instance tags read owner = company - but the key wasn’t in any documentation, wasn’t in any secrets manager. I had to ping colleagues and management one by one. That took time. The certificate stayed expired.

The key was found. I still couldn’t connect - the instance was not quite dead, but definitely not alive.

Took a snapshot. Launched a new instance from it - with my own key and SSM agent configured. Connected. Ran the cron job manually. Certificate renewed. Gluu came back up. By the time it did, it was dark outside.

Problem solved. And now - while things were quiet - I decided to look at what exactly I'd been poking at.

A t2.micro. A dedicated virtual machine, running 24/7. One job: a daily cron run that checks one certificate and renews it when it's due. Certbot, on its own instance. Paid for every month.

A dedicated server. Around the clock. For one real renewal every couple of months.

Candidate for replacement.

I’d solved a similar problem before - Let’s Encrypt in AWS China, where ACM certificates aren’t available. The key design decision there was the same one I wanted here: no dedicated instance. A Lambda function that triggers on a schedule, does its job, and shuts down. No idle cost, no server to babysit. I took that architecture and rewrote it for this situation: EventBridge triggers a check once a day, DynamoDB stores the domain → certificate ARN mapping, if a cert is expiring - a Lambda gets a new one from Let’s Encrypt, imports it into ACM, puts it in S3.
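A minimal sketch of the daily check described above, not the actual Lambda. It assumes the DynamoDB table has already been read into a plain dict and that the expiry lookup is injected as a function. In a real Lambda that lookup could wrap ACM's `describe_certificate` and read `Certificate.NotAfter`. The function name, the 30-day window, and the sample ARNs are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Dict, List

RENEW_BEFORE_DAYS = 30  # assumed renewal window, not taken from the original setup


def domains_needing_renewal(
    mapping: Dict[str, str],
    get_not_after: Callable[[str], datetime],
    now: datetime,
    renew_before_days: int = RENEW_BEFORE_DAYS,
) -> List[str]:
    """Return the domains whose certificate expires within the renewal window."""
    deadline = now + timedelta(days=renew_before_days)
    due = []
    for domain, cert_arn in sorted(mapping.items()):
        # get_not_after is whatever resolves an ARN to its expiry time.
        if get_not_after(cert_arn) <= deadline:
            due.append(domain)
    return due


if __name__ == "__main__":
    # Fake data standing in for the DynamoDB mapping and the ACM lookup.
    now = datetime.now(timezone.utc)
    fake_expiry = {"arn:example:1": now + timedelta(days=12)}
    fake_mapping = {"sso.example.com": "arn:example:1"}
    print(domains_needing_renewal(fake_mapping, fake_expiry.__getitem__, now))
```

Keeping the decision separate from the AWS calls means the "is it expiring" logic can be tested without touching an account.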

Overkill for one certificate? Yes. But transparent, scalable, and no permanent instance required.

Calendar reminder: check in 60 days when the renewal is due.

Seems to work.

Act II: 60 Days Later - The Ghost

The reminder fired. Time to check.

Certificate in ACM - updated. In S3 - updated. ALB is using the new one.

Gluu is using the old one.

Checked the documentation - found an instruction: “do step one, do step two, get result.” Why - not written. What for - not written.

Went to look at the Lambda that was supposed to deliver the updated certificate to Gluu. Found the address - it was connecting via SSH to tls_renew_instance. That instance. The one I’d shut down two months ago.

The new architecture was issuing certificates correctly. Then handing them to a ghost.

Good thing I hadn’t deleted that snapshot instance - the one I’d spun up for the quick fix. Turned it back on. Found the script. Opened it.

The chain:

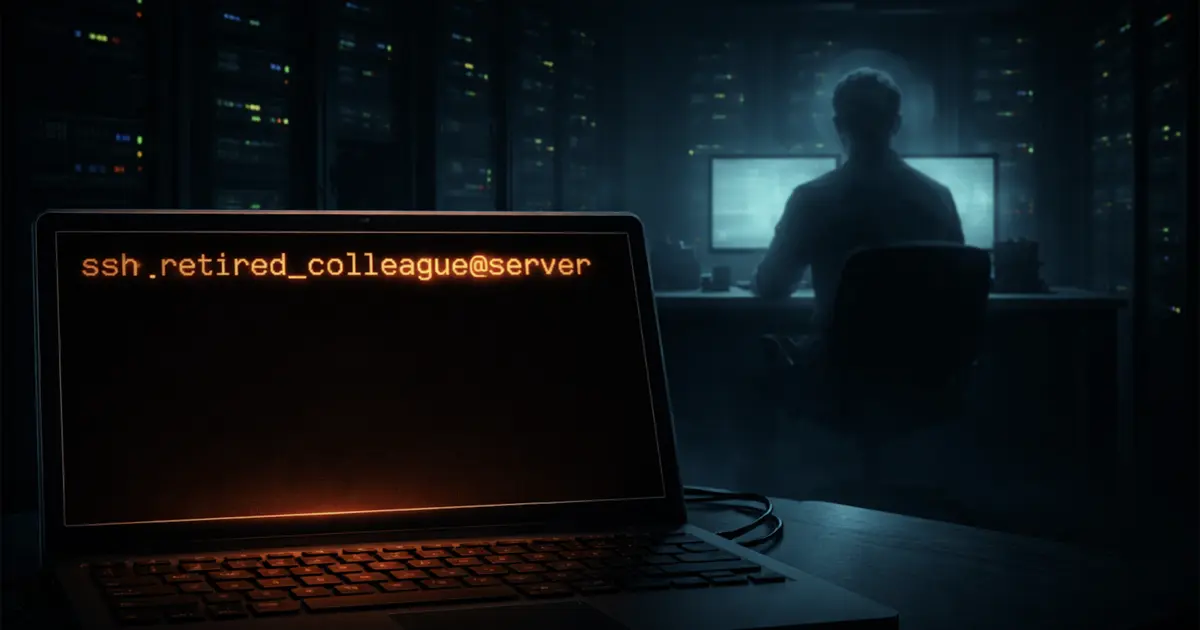

s3 event → lambda → ssh retired_colleague@tls_renew → ssh retired_colleague@gluu → sudo gluu.service → renew.sh

retired_colleague.

A user who’d left the company a long time ago. Their home directory on the server - still there. Their SSH keys - still valid. Their script - still running every 60 days.

I opened renew.sh.

Twelve backup operations before the script did anything useful. The backups were written into the same directory they were backing up. Error checks: zero. Result verification: zero. Every 60 days, /etc/certs/ grew twelve new layers, like rings on a tree.
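For contrast, a sketch of what a single backup step could look like. This is my illustration, not the original renew.sh. It copies one file into a timestamped folder outside the directory being backed up, refuses to run if the backup location sits inside that directory, and verifies the copy. The function name and directory layout are assumptions.

```python
import filecmp
import shutil
import time
from pathlib import Path


def backup_file(source: Path, backup_root: Path) -> Path:
    """Copy `source` into a timestamped folder under `backup_root` and verify it."""
    source = source.resolve()
    backup_root = backup_root.resolve()
    # Refuse the original mistake: a backup location inside the backed-up directory.
    if backup_root == source.parent or source.parent in backup_root.parents:
        raise ValueError(f"{backup_root} is inside {source.parent}")
    target_dir = backup_root / time.strftime("%Y%m%dT%H%M%S")
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / source.name
    shutil.copy2(source, target)  # copy2 also preserves timestamps and mode bits
    # Byte-for-byte comparison, so a truncated copy is caught immediately.
    if not filecmp.cmp(source, target, shallow=False):
        raise RuntimeError(f"backup of {source} does not match the original")
    return target
```

One backup, one check, one place to look when something goes wrong.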

Somebody was very afraid of losing a file. And not at all afraid of taking down production.

Fixed the chain - removed the intermediate hop, Lambda now goes directly to Gluu. Replaced the SSH keys. Fixed the backup paths. Ran the script - didn’t work. Checked the logs, verified permissions, confirmed all files were in place. Ran it again - certificate updated in Gluu.

Set another reminder. 60 days.

Seems to work. More or less.

Act III: Java Keeps Secrets

The reminder fired.

Certificate in ACM - fresh. In S3 - fresh. In Gluu - old. Again.

This time I didn’t patch the surface.

Traced the chain to the end: s3 → lambda → gluu → script → local copy. The local copy was correct. The fresh certificate was sitting on disk. Gluu wasn’t seeing it.

Went into the script with a flashlight.

Apache - PID file not cleared on restart. Fixed. Gluu still doesn’t trust the certificate.

Self-signed cert - renewing correctly.

File permissions - clean.

Fullchain - assembled correctly.

keytool import - completed.

The server insists it did everything right. And doesn’t work.

Added delays between service starts. Added checks that each process actually came up. Ran it.

Doesn’t work.

Got up. Got water. Sat back down.

Opened the logs. Kilometers of Java stack traces, written for other Java programs, not for humans. Somewhere in there - one line that mattered:

PKIX path validation failed: CertPathValidatorException: timestamp check failed

Java thought the certificate was expired. I knew I’d imported a fresh one into the keystore. The right keystore, into all three aliases - external_idp, oxauth_ssl, gluu_openldap - I still don’t know why there are three, but I updated all of them to be safe.
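A sketch of how the three-alias import could be scripted, built as argument lists so each command can be logged before it runs. The `keytool` flags used here (`-delete`, `-importcert`, `-list -v`, `-alias`, `-file`, `-keystore`, `-storepass`, `-noprompt`) are standard. The keystore path, password handling, and function name are placeholders I made up, not the values from the real server.

```python
from typing import List

# The three aliases named in the text.
ALIASES = ["external_idp", "oxauth_ssl", "gluu_openldap"]


def keytool_commands(
    alias: str, cert_path: str, keystore: str, storepass: str
) -> List[List[str]]:
    """Build the delete / import / list commands for one alias."""
    common = ["-alias", alias, "-keystore", keystore, "-storepass", storepass]
    return [
        # Remove the old entry first; this fails if the alias does not exist yet.
        ["keytool", "-delete", *common],
        # Import the fresh certificate without the interactive trust prompt.
        ["keytool", "-importcert", "-noprompt", "-file", cert_path, *common],
        # Print the stored entry, including its validity dates, for the log.
        ["keytool", "-list", "-v", *common],
    ]


if __name__ == "__main__":
    for alias in ALIASES:
        # Placeholder paths and password, for illustration only.
        for cmd in keytool_commands(alias, "/path/to/fullchain.pem",
                                    "/path/to/keystore.jks", "changeit"):
            print(" ".join(cmd))
```

The final `-list -v` shows what is actually on disk, which is the thing to compare against what Java reports at runtime. Passing the password on the command line makes it visible in the process list, so a real script would want something better.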

keytool said the import worked. Java said the certificate was old. Cache? Load order? I didn’t know exactly. But I knew the answer was in the logs.

I read them again. Slowly. Not looking for the error - the error was clear. Looking for the order. The sequence. Who started first, who started last.

And I saw it - not the answer. A loose end to pull on. But it was there.

The services were starting simultaneously. One of them - slower than the rest. While it was coming up and reconnecting to the keystore, the others were already running. In the old script - chaotic startup, no delays. That was a bug. But that bug had a useful side effect: the slow service would catch the fresh certificate during its reconnect, because by then the others had already released the file handle.

I made the startup ordered. Deterministic. Correct.

The second bug stopped compensating for the first. The system broke.

Classic.

Downtime was unavoidable. I tried to avoid it.

What was needed: stop everything → clean caches and PID files → load the new certificate → start services in the right order with delays → verify each one actually came up.

I rewrote the script from scratch. A check at every step. Logging. A wait after each service start. A count of Java processes - exactly four. A test of the OAuth endpoint.
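The shape of that rewrite, as a minimal sketch rather than the real script: every step is an action plus a check, with a wait in between, and the run stops at the first check that fails. The step names and the `run_steps` helper are my own illustration. The real actions (stopping services, cleaning PID files, counting Java processes) would be plugged in as functions.

```python
import time
from typing import Callable, List, Tuple

# (name, action, check): run the action, wait, then confirm it worked.
Step = Tuple[str, Callable[[], None], Callable[[], bool]]


def run_steps(
    steps: List[Step],
    wait_seconds: float = 0.0,
    log: Callable[[str], None] = print,
) -> None:
    """Run steps in order; raise at the first step whose check fails."""
    for name, action, check in steps:
        log(f"[run] {name}")
        action()
        time.sleep(wait_seconds)  # give the service time to actually come up
        if not check():
            raise RuntimeError(f"step failed verification: {name}")
        log(f"[ok]  {name}")


if __name__ == "__main__":
    # Stand-in steps; real ones would call systemctl, keytool, and so on.
    state = {"running": True}
    demo = [
        ("stop services", lambda: state.update(running=False),
         lambda: not state["running"]),
        ("start services", lambda: state.update(running=True),
         lambda: state["running"]),
    ]
    run_steps(demo)
```

Failing fast matters here: a half-started stack that keeps going is exactly how the old script hid its problems.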

And a separate debug script. And documentation - not “do step one, do step two,” but with an explanation of what, why, and why exactly this way.

The Final Run

Scheduled the maintenance window in advance. Off-hours. Client notified.

Didn’t wait for EventBridge’s schedule - triggered the chain manually. The system was supposed to handle itself. But I wanted to watch.

Ventilator: domain found, certificate expiring. Issuer triggered.

Issuer: Let’s Encrypt responded. Certificate obtained. Imported into ACM. Pushed to S3.

S3 event: Lambda triggered. SSH into Gluu.

Script: stop. PID file cleanup. Import into keystore. Start in the right order. Wait. Java processes - 4/4.

OAuth endpoint check: HTTP 400.

A request with no token is supposed to get HTTP 400: the endpoint is up and rejecting bad input. So 400 means working. By design.
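A sketch of that kind of probe, under stated assumptions. `urllib` raises `HTTPError` for 4xx and 5xx responses, so the status code has to be read from the exception. The function names are mine, and treating 400 as the only healthy answer is an assumption that fits this one tokenless check.

```python
import urllib.error
import urllib.request
from typing import Collection


def probe_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status of a GET, including 4xx/5xx answers."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # The server answered, just not with 2xx; the code is what we want.
        return err.code
    # Connection and TLS failures raise URLError and are left to propagate:
    # an expired certificate should fail the probe loudly.


def endpoint_is_up(status: int, expected: Collection[int] = (400,)) -> bool:
    """True if the status is one we expect from a tokenless request."""
    return status in expected
```

Because `urlopen` verifies the server certificate by default, the same probe also fails when the certificate itself is bad.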

Everything ran automatically. First try.

Now every 60 days the system renews the certificate on its own. A separate monitor checks the expiration date - if there are fewer than 14 days left, an alert fires. Not a success email you stop noticing after six months. A signal that something needs attention.
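The alert rule fits in a few lines. This is a sketch, not the monitor that is actually deployed. It assumes the expiry date arrives in the `notAfter` format that Python's `ssl.SSLSocket.getpeercert()` returns, and uses the standard library's `ssl.cert_time_to_seconds` to parse it. The function names are illustrative; the 14-day threshold is the one from the text.

```python
import ssl
import time
from typing import Optional

ALERT_THRESHOLD_DAYS = 14  # threshold from the text
SECONDS_PER_DAY = 86400


def days_left(not_after: str, now: float) -> float:
    """Days between `now` (epoch seconds) and a notAfter string like
    'Jan 10 00:00:00 2024 GMT'."""
    return (ssl.cert_time_to_seconds(not_after) - now) / SECONDS_PER_DAY


def needs_alert(
    not_after: str,
    now: Optional[float] = None,
    threshold_days: float = ALERT_THRESHOLD_DAYS,
) -> bool:
    """True when fewer than `threshold_days` remain before expiry."""
    current = time.time() if now is None else now
    return days_left(not_after, current) < threshold_days
```

The monitor stays silent while everything is fine and only speaks when the renewal chain has already missed its window.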

Gluu 3.1.8 is still running. Can’t upgrade - the license changed, and the breaking changes are large enough that it’s not an upgrade, it’s a fresh install with manual migration. The client is planning to move to a different solution. Someday.

But right now the system is no longer a mystery. There’s a runbook - what to do if it breaks. There’s a full write-up - how it works and why exactly that way. There’s a debug script. The next engineer won’t have to start from zero.

It works.

Now I know why.